what the typing class was for

a page on a skill we spent thirty years teaching that might not matter anymore. sit with it. 10 min.

what’s playing: slow burn awareness — mereba, odeal, tyla, barry can't swim.

saturday afternoon. airport. gate c14. flight back to chicago.

i’m the one not looking at my screen.

everyone else is. laptops open. headphones in. fingers moving. doing things. processing. navigating menus, clicking through tabs, composing emails, filling out forms. the ordinary machinery of a workday that followed them to the terminal.

i’m sitting here watching them and wondering. not judging. wondering. what they’re afraid of. what they’re hoping for. how they’re using ai today. whether they feel the ground shifting underneath all that clicking.

earlier this week, on st. paddy’s day, i showed up to a hackathon in sweats. two hours to build something with a team i’d never met. i could do everything. i knew how to break the problem into pieces. give each piece to the right machine. get something back. i tried to delegate. my teammates stalled. not because they weren’t smart. they were. but they sat in front of the same interface i was using and came up empty. they were asking the machine what to do. i was telling it what i needed.

we didn’t get to pitch. i wanted the room to see what we’d built. how. so they could learn.

the outcome-thinker didn’t get the outcome. that’s worth holding too.

small room. big room. same question.

i remember when they taught us how to type.

what is actually happening.

the word “computer” first appeared in 1613. it meant a person.

someone employed to perform calculations. following fixed rules. processing information with precision. no authority to deviate from the instructions given. for three hundred years, a computer was a human being doing what we now describe machines as doing.

you can look them up. katherine johnson. who computed orbital trajectories by hand for nasa’s early missions. the women at harvard’s observatory who catalogued hundreds of thousands of stars. not seen as scientists, but as computers. the women of langley. hired because the job required patience and precision. not because anyone thought they were building careers.

then in 1945, the machines arrived. the word migrated from the person to the machine. the humans who programmed the machines were recruited from the same pool the machines were replacing. they knew the work. they just had to teach the machine to do it.

and then we decided everyone needed to learn to talk to the machine.

home row. ctrl+c. file → save as. thirty years of mandatory curriculum built around a single premise. to be useful in the modern economy, you must learn to operate the machine.

what we were really teaching was translation. how to encode human intent into machine language. computer literacy wasn’t about computers. it was about learning to be the bridge between what you wanted and what the machine could do.

thirty years of humans learning to be used well by machines.

three things changed that.

the first was chatgpt. november 2022. made the machine readable. but it got things wrong and required you to catch the mistakes. you had to bring the agency. the skill was still yours.

the second was reasoning. september 2024. the machine started checking itself. the burden of verification started lifting. you were still in the loop. but the loop was getting smaller.

the third is what most people haven’t registered yet. computer use.

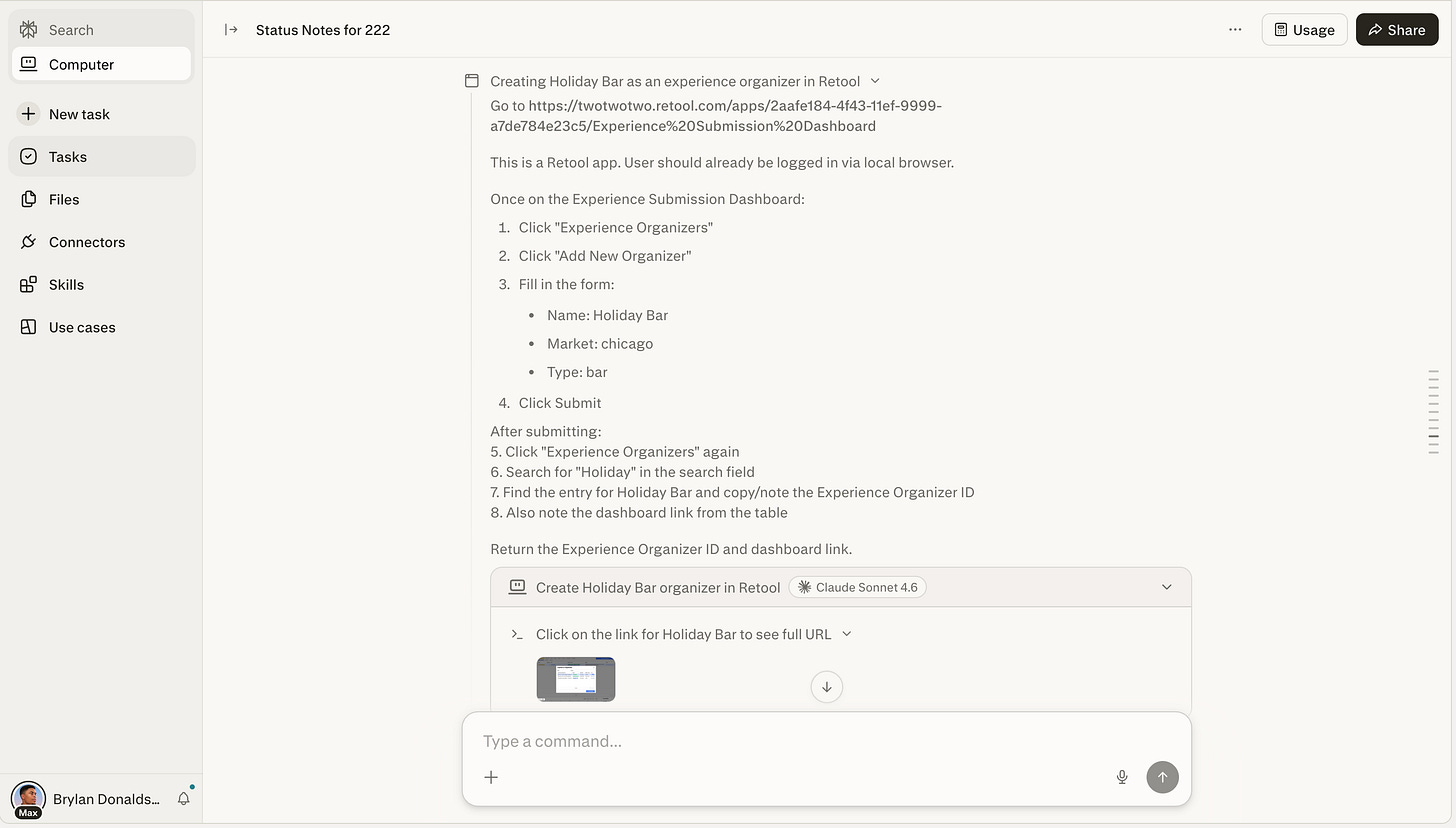

sometime in late 2025, ai learned to use a computer the way you do. open a browser. navigate to a site. read what’s on the screen. click the button. fill out the form. send the email. not by being told each step. by being given an outcome and figuring out the path itself.

anthropic moved first. claude cowork. a desktop agent that lives on your computer, accesses your files, controls your browser, executes tasks autonomously. you describe what needs to happen. it makes a plan and works through it. it felt less like a tool and more like leaving messages for someone who actually does the work.

openclaw followed. an independent developer built an open source agent that runs on your own hardware. it’s accessible through whatsapp, telegram, imessage. it can control your browser, manage your files, and keep working while you’re away from your desk. in three weeks it became the most downloaded open source project in github history, surpassing thirty years of linux adoption. jensen huang called it “probably the single most important release of software, probably ever.”

then perplexity launched computer. nineteen specialized frontier models orchestrated automatically. you describe an outcome. the system routes each piece to the right model and runs them in parallel. for hours or months. all while you do other things.

three products. one direction. the machine does the clicking now.

people don’t just want smarter answers. they want something that acts.

the computer learned to use itself.

most people are already living in the gap between what the economy demands and what they can do.

the oecd found that only five percent of adults score at the highest level of computer proficiency. a quarter can’t use one at all. and ninety-two percent of jobs require digital skills.

computer use either closes that gap or makes it permanent.

what i noticed.

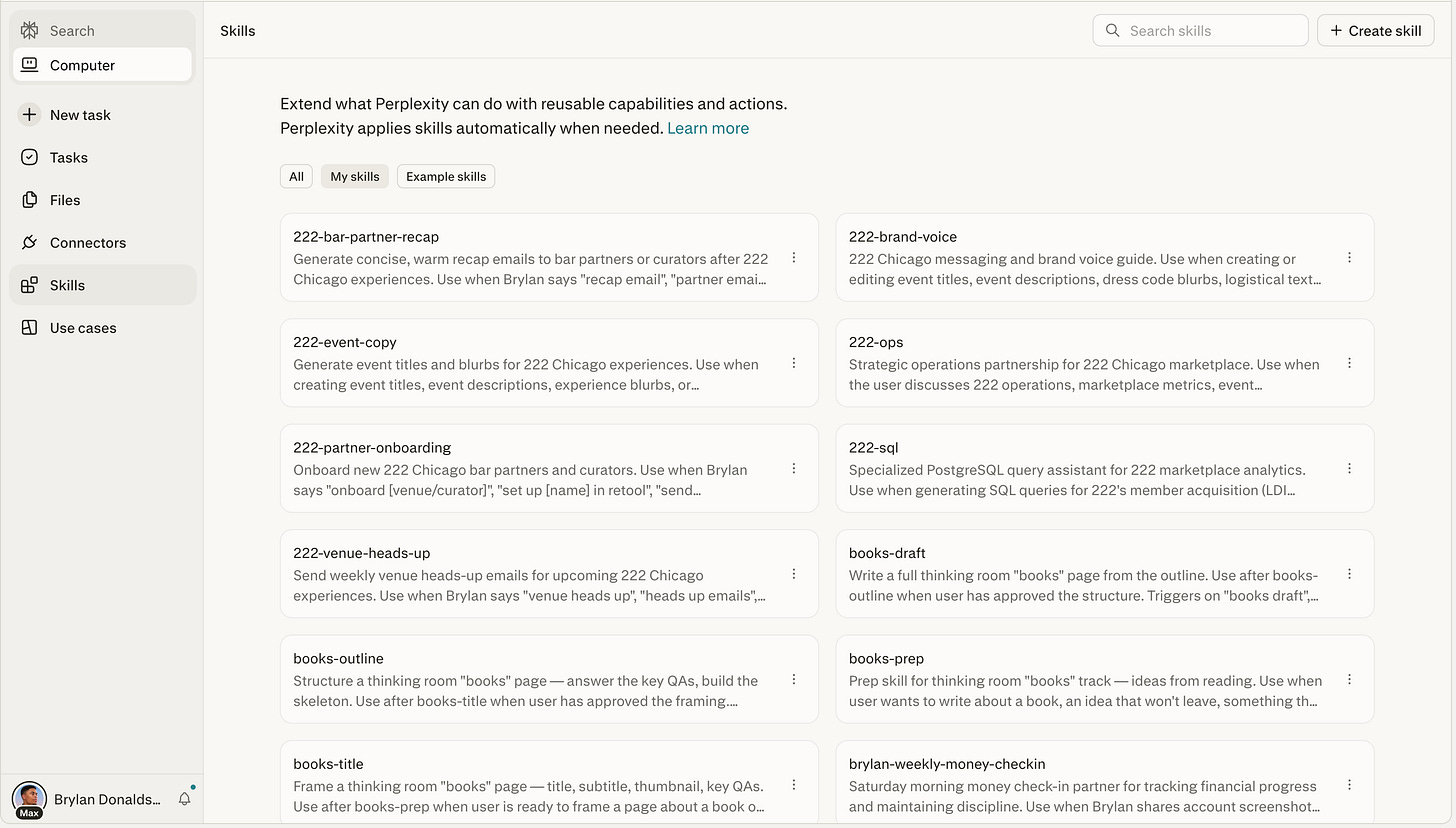

this week i built seventeen skills. sets of instructions that tell the machine what to do when i show up with what i want. the machine already knows its job.

for example, i onboarded partners in atlanta. that means creating the partner in the system, setting up dashboards, drafting the emails, looking up contacts. twelve steps, back and forth across multiple tabs. i provided the context. the machine handled the execution.

you’re the context. the machine is doing the clicking.

the gap i saw at the hackathon wasn’t technical. my teammates weren’t less intelligent. they were thinking in tasks. asking the machine what to do. i was thinking in outcomes. telling it what i needed. that difference, right now, is the whole ballgame.

but here’s what i keep sitting with.

three out of four workers who try ai tools abandon them mid-task. not because the tool failed. because they couldn’t tell if it succeeded. the adoption gap isn’t capability. it’s trust. and trust requires being able to check the work.

i think about my brother. a lot, this week.

he’s smart. genuinely smart. but the digital world was never built for how he thinks. he struggles with the basics. not because he can’t learn, but because the basics were always arbitrary. copy-paste. right-click versus left-click. where the file went after you downloaded it. why the same button does different things in different apps. he’d call me and i could hear the frustration. not “i’m stupid” frustration, but “why does this have to be this hard” frustration. and he was right to ask. it shouldn’t have been.

for thirty years, we built interfaces that demanded you memorize their language. ctrl+c on windows, command+c on mac. file → save as. navigate to the folder. name it something you’ll remember. millions of small translations between what you meant and what the machine required. my brother isn’t bad at computers. he just never accepted the terms of the translation. and nobody told him that was a valid response.

ai doesn’t automatically fix that for him. in some ways it makes it worse. the expectation shifts. if the computer can use itself, the question becomes, can you at least confirm it did it right? computer use hands you leverage you didn’t have before. but only if you know what good looks like on the other side. my brother has never been given the tools to know what good looks like. not because he can’t. because no one designed that bridge for him.

the gap doesn’t close for him. it widens.

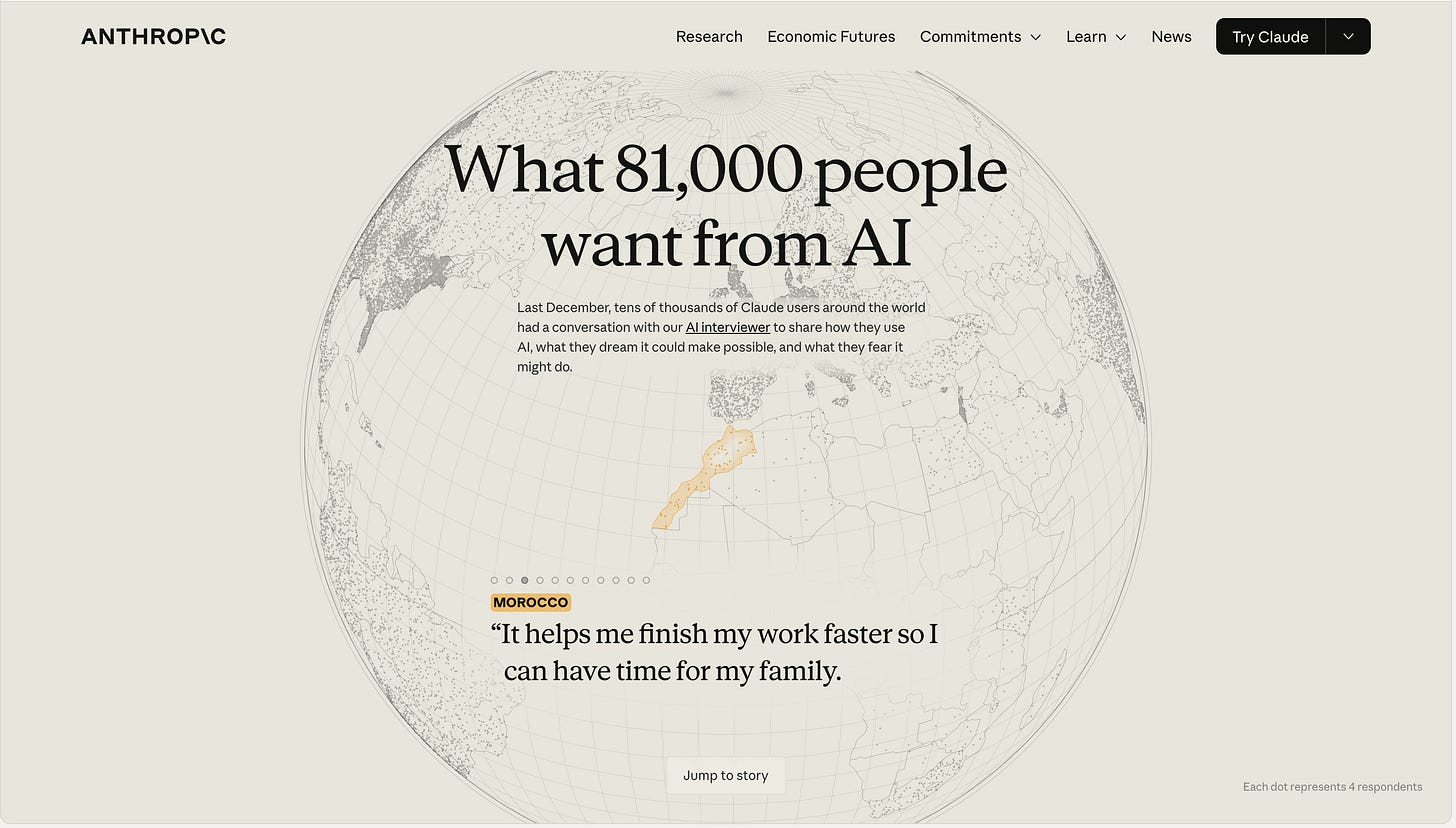

and he’s not alone. anthropic interviewed eighty-one thousand people across a hundred and fifty-nine countries. what people asked for most wasn’t speed. it wasn’t efficiency. it was presence. something that actually understands their situation. the people who need ai most aren’t asking for a faster tool. they’re asking for a kinder interface. one that meets them where they are.

watching the agent work this week, i realized what digital work actually is. not thinking. navigating. clicking. formatting. switching apps. hundreds of micro-decisions per task. the agent absorbs all of them.

but here’s the question i haven’t resolved. if reasoning models already check themselves and orchestration systems verify across multiple models, then verification itself might be the next thing that gets automated. my brother’s gap might not be permanent. it might be an interface failure that the next generation of tools actually solves. maybe the right agent doesn’t ask him to verify. it asks him what he wants and confirms it got there. maybe the bridge isn’t literacy anymore. maybe it’s design.

i don’t know. i want that to be true. i’m not sure it will be. but i’m not willing to assume my brother’s gap is forever when the interfaces that created it are the very thing being replaced.

how i’m seeing it.

cowork made a specific bet. the value is in knowing your context. your files. your folders. your workflows. built into your machine. perplexity made the opposite bet. the value is in knowing which model to use. nineteen models, routed automatically, each best in class at a specific kind of work. they’re playing the orchestra, not the instrument.

both bets are live. but the direction underneath both is the same. the model is becoming invisible. the interface that deploys it is what people experience.

openclaw proved the demand was real before both of them. three weeks to surpass linux’s thirty-year adoption curve. jensen at gtc: “every company in the world today needs to have an openclaw strategy. this is the new computer.” and his trillion dollar revenue forecast is arithmetic, not ambition. by nvidia’s estimate, every agentic task consumes around a thousand times more tokens than a single prompt, and the number of people handing outcomes to machines instead of clicking themselves is just getting started. we’re at an inflection where “the last prompt was queries. this prompt is actions. do something for me.”

the market is still largely pricing the model as the moat. but last january, two models held ninety percent of enterprise usage. by december, no single model held more than twenty-three percent. meta paid two billion for manus. not for a foundation model, for the layer that tells the computer what to do. apple built the platform everyone is running this on. they just haven’t claimed what it’s becoming yet.

the value isn’t in building the smartest model anymore. it’s in building the thing that knows when to use which one.

when the economy tightens, the jobs that go first are built on execution. the clicking, the navigating, the following of fixed rules. the same jobs thirty years of typing classes prepared people for. with hormuz closed and costs climbing, companies aren’t waiting for the comfortable moment. the war created the cover. computer use was already waiting. the timing isn’t coincidental. it’s compressive.

what survives is knowing what you want. and knowing how to tell if you got it. the typing class taught you to operate the machine. computer use means the machine operates itself. that’s what the typing class was for. and for most people, it turns out it was preparation for something that no longer needs them to show up.

some lingering thoughts.

the typing class was mandatory. someone decided the skill mattered enough to require. nobody is requiring the next one. what does it mean that the most important literacy of our time is opt-in?

when your manager can hand an outcome to an agent instead of delegating it to you, what’s left of the entry-level job? where do people learn judgment if they never get to do the work that builds it?

if the value moves from knowing how to use the tool to knowing what you want from it, what happens to the people who were never taught to want clearly? who were taught to follow instructions, not give them?

my brother’s boss sees him as slow. what happens when the boss gets an agent that’s fast and decides the gap between them is no longer worth closing?

being early and being wrong feel the same from the inside. what am i not seeing?

the read: agents over bubbles — ben thompson — the clearest framework i found this week for why computer use changes the economics of everything above the GPU.

the product: perplexity computer — don’t just read about it. understand how it works. this is what the shift looks like when it’s a product.

the rabbit hole: letter 8 — global hub theory — kp — went against every narrative i was holding. left me asking where i need to revisit my assumptions.

the question i can’t shake: what happens to the people on both sides of the computer literacy divide when the computer starts using itself?

the people who built careers on the translation. using excel. powerpoint. navigating the browser. formatting the spreadsheet. sending that follow-up. the machine can do all of that now. the people who never learned. the computer was supposed to be their way up. now it’s doing the clicking.

both sides lose something when the computer starts using itself. but maybe the same shift that makes the skill obsolete also makes the interface kinder. maybe the bridge my brother never got is the one being built right now.

what grows in that space is still being decided.

they taught us to use computers. nobody taught us what to do when computers use themselves.

that’s the part we figure out now.

— b.